Generative AI for Enterprise: How to Move Beyond ChatGPT to Built-In Company Value

Alexander Stasiak

Feb 27, 2026・12 min read

Table of Contents

Introduction: From ChatGPT Demos to Real Enterprise Value

The Evolution: From Public Chatbots to Enterprise-Grade Generative AI

Why Enterprises Must Move Beyond Standalone ChatGPT

From Interaction to Integration: Embedding Generative AI in Core Workflows

Designing AI-First Business Processes

Building Domain-Specific Intelligence: RAG, Fine-Tuning, and Private Models

Defining and Curating High-Value Enterprise Data

Guardrails, Policies, and Human-in-the-Loop

High-Impact Enterprise Use Cases Beyond ChatGPT

Product, Engineering, and R&D

Operations, Supply Chain, and Manufacturing

Finance, Risk, and Compliance

Customer Experience and Revenue Teams

A Practical Roadmap: From Pilot to Platform

Prioritizing Use Cases and Measuring ROI

Technology, Talent, and Operating Model

Risk, Compliance, and Responsible Adoption

Human Oversight and Change Management

Conclusion: Making Generative AI a Built-In Advantage

Introduction: From ChatGPT Demos to Real Enterprise Value

When ChatGPT launched in November 2022, it sparked an explosion of enterprise experimentation. By early 2023, nearly every company had teams playing with the tool: drafting marketing copy, summarizing documents, and generating email responses. More than three years later, most organizations are still stuck in that same experimental phase. They have chatbots. They have content creation tools. But they don’t have measurable business value flowing to the bottom line.

The problem isn’t the technology. It’s the approach. Most companies treat generative AI as a bolt-on tool, a separate chat window employees open occasionally, rather than a built-in capability woven into core business operations. Gartner has forecast that by 2026 more than 80% of enterprises will have used generative AI APIs or models, or deployed generative AI-enabled applications in production. The companies winning this race aren’t just using AI; they’re embedding it into workflows, decisions, and customer interactions.

This article will show you how to move beyond generic ChatGPT usage into embedded, measurable enterprise value. The three main levers are:

- Workflow integration: AI inside processes, apps, and decisions rather than as a separate interface

- Domain-specific intelligence: Models that understand your data, policies, and industry context

- Governance and compliance: Controls that enable confident scaling across sensitive business functions

The distinction matters. “Bolt-on AI” means employees copy-paste between ChatGPT and their actual work. “Built-in AI” means AI suggestions, automations, and actions happen inside the systems where work already lives. The former delivers incremental productivity gains. The latter delivers structural transformation.

The Evolution: From Public Chatbots to Enterprise-Grade Generative AI

The path from research curiosity to enterprise necessity happened faster than anyone predicted. GPT-2 arrived in 2019 as a research demonstration. GPT-3 in 2020 showed what large language models could do at scale. Then ChatGPT’s November 2022 launch brought generative artificial intelligence to the mainstream, reaching an estimated 100 million users within about two months, faster than any consumer application before it.

But consumer tools and enterprise deployments are fundamentally different. Public chatbots such as the ChatGPT, Gemini, and Claude web apps serve individual users with general knowledge. Enterprise deployments sit behind SSO, operate within VPCs, and connect to proprietary data under strict governance controls.

Here’s how the enterprise landscape evolved:

- 2023: Companies experimented with marketing copy, email drafts, and standalone chatbots for customer service

- 2024: Major platforms (Salesforce, ServiceNow, SAP, Snowflake) embedded generative AI directly into their products

- 2025: Enterprises began building AI agents that take actions—creating tickets, updating records, triggering approvals—not just generating text

- Integration became the differentiator: Companies connecting AI to CRM data, ERP transactions, and internal knowledge bases saw material ROI while others stayed stuck in pilot mode

This evolution marks the shift from “AI as a chat interface” to “AI as an invisible layer in workflows and decisions.” The chatbot phase was necessary for learning. The integration phase is where value compounds.

Why Enterprises Must Move Beyond Standalone ChatGPT

Plain ChatGPT has fundamental limitations for enterprise use. Understanding these gaps explains why business leaders are demanding more sophisticated approaches.

The core limitations include no direct access to proprietary data (ChatGPT doesn’t know your customers, contracts, or policies), limited control over data residency (sensitive information flowing to external servers), and weak alignment with internal compliance requirements out-of-the-box. For industries governed by GDPR, HIPAA, or sector-specific regulations, these aren’t minor inconveniences—they’re deployment blockers.

Executives now expect generative AI tools that deliver:

- Integration with existing data in CRM, ERP, and data warehouses

- Full auditability of AI-generated outputs and decisions

- Compliance with data privacy regulations and industry standards

- ROI tied to real business KPIs, not just “employee satisfaction with AI tools”

- Risk assessment capabilities that flag potential issues before they reach customers

Relying only on a general-purpose chatbot caps impact at individual productivity improvements. Someone drafts emails 20% faster. Another person summarizes documents more quickly. These gains matter, but they don’t change how the company operates. They don’t reduce cost structures, accelerate revenue cycles, or mitigate compliance risks at a structural level.

Here’s what “beyond ChatGPT” expectations look like in practice:

- Auto-drafting contracts that already include your company’s standard clauses and compliance language

- Generating code changes directly from support tickets, tested and ready for review

- Summarizing customer interactions by pulling full context from CRM timelines

- Producing variance analyses that reference your specific chart of accounts and business units

- Creating training materials customized to your products, policies, and procedures

Generic chat tools don’t satisfy these needs on their own. Meeting them requires the integration, customization, and governance infrastructure that lets enterprises apply AI capabilities to their own data and processes.

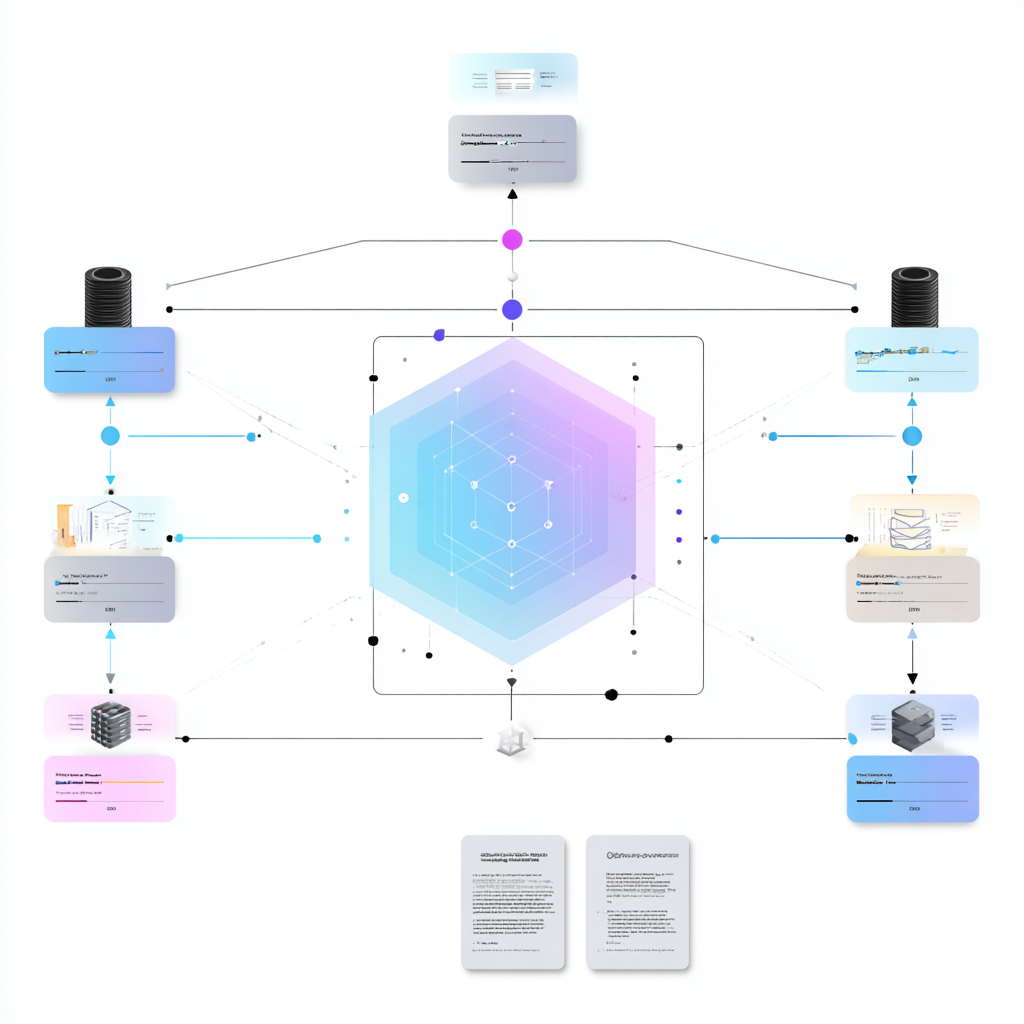

From Interaction to Integration: Embedding Generative AI in Core Workflows

Generative AI becomes valuable when it’s deeply integrated into systems where work already happens—Jira, SAP, Salesforce, Workday, ServiceNow, and internal data warehouses. The goal isn’t teaching employees to use a new AI tool. It’s making AI assistance appear automatically where and when it’s needed.

Concrete workflow examples demonstrate this principle:

- Automated RFP responses: AI drafts responses by pulling from your proposal library, case studies, and pricing templates, then routes to the right approvers

- AI-assisted financial close: Variance explanations auto-generate from transaction data, reducing time FP&A analysts spend on routine commentary

- Intelligent document processing in claims: Insurance claims are parsed, validated against policy terms, and routed with recommended actions

- Code review bots in CI/CD: Pull requests get automated review comments, security vulnerability flags, and suggested fixes before human review

- Knowledge assistants on internal wikis: Employees ask questions in natural language and get answers synthesized from HR policies, IT documentation, and operational procedures

The AI agents pattern takes this further. Instead of just returning text, agents trigger actions: create tickets, update records, send approval requests, schedule meetings. This transforms AI from a consultation tool into an active participant in business processes.
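
A minimal sketch of the agent pattern, assuming the model returns a structured JSON action instead of free text. The functions `create_ticket` and `update_record` and the JSON shape are hypothetical stand-ins for real ticketing and CRM APIs, and the model response is hard-coded here rather than produced by an LLM call.

```python
import json

# Hypothetical stand-ins for calls into a ticketing system and a CRM.
def create_ticket(title: str, priority: str) -> dict:
    return {"action": "create_ticket", "title": title, "priority": priority}

def update_record(record_id: str, field: str, value: str) -> dict:
    return {"action": "update_record", "record_id": record_id, field: value}

# Allow-list: the agent can only trigger actions registered here.
TOOLS = {"create_ticket": create_ticket, "update_record": update_record}

def dispatch(model_output: str) -> dict:
    """Parse a structured action proposed by the model and run it if allowed."""
    request = json.loads(model_output)
    name = request.get("tool")
    if name not in TOOLS:
        return {"action": "rejected", "reason": f"unknown tool: {name}"}
    return TOOLS[name](**request.get("args", {}))

# Assumed model response; in practice this would come from an LLM call.
proposed = '{"tool": "create_ticket", "args": {"title": "VPN outage", "priority": "high"}}'
result = dispatch(proposed)

# A tool that is not on the allow-list is rejected instead of executed.
blocked = dispatch('{"tool": "delete_database", "args": {}}')
```

The allow-list is the design point: the model proposes actions, but only registered functions can run, which keeps the agent inside the permissions you have reviewed.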

UI patterns that work for enterprise adoption:

- Side panels in existing apps (answer questions without switching context)

- Inline suggestions (AI recommendations appear as employees work)

- Background automation (AI processes documents and flags exceptions without user initiation)

This approach keeps employees in their familiar tools while augmenting their capabilities with embedded intelligence.

Designing AI-First Business Processes

Simply dropping AI into old processes under-delivers. Companies see the biggest gains when they redesign workflows with AI as a first-class participant, not an afterthought.

Consider the shift from traditional monthly reporting to continuous, AI-generated management views. In the old model, analysts spend the first two weeks of each month gathering data, building Excel models, and creating PowerPoint decks. In the AI-first model, dashboards update continuously with AI-generated narrative explanations of variances, trend analysis, and recommended actions.

Steps to redesign processes for AI include:

- Map the current process: Document every step, decision point, handoff, and approval

- Identify AI insertion points: Where can AI draft, suggest, validate, or automate?

- Define human approval gates: Which decisions require human judgment vs. AI autonomy?

- Build feedback loops: How will humans correct AI outputs to improve future performance?

- Document and train: Teams need clear guidance on when to trust and when to challenge AI outputs

A practical example: An insurance company redesigned their underwriting workflow. Previously, underwriters manually reviewed applications, pulled external data, and made decisions. In the redesigned process, AI pre-populates risk assessments, flags anomalies, and recommends pricing. Underwriters focus on exceptions and complex cases. Time spent on routine applications dropped 60%, while human oversight remained on high-risk decisions.

Change management matters as much as technology. Teams need training on new workflows, clear escalation paths, and psychological safety to report when AI makes mistakes.

Building Domain-Specific Intelligence: RAG, Fine-Tuning, and Private Models

Real enterprise value emerges when generative AI understands your company’s own data—policies, contracts, product documentation, support tickets, sensor logs, and market research. Generic foundation models trained on internet data can’t answer questions about your specific customers, products, or operations.

Retrieval-Augmented Generation (RAG) became the standard pattern between 2023 and 2025 for combining large language models with private data. The concept is straightforward: when a user asks a question, the system first retrieves relevant documents from your knowledge base, then passes those documents to the LLM along with the question. The model generates answers grounded in your actual data rather than its general training.
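
The retrieve-then-generate flow can be sketched as follows. This is a toy version under stated assumptions: an in-memory corpus and a bag-of-words similarity stand in for a real embedding model and vector database, the document ids and the helpers `retrieve` and `build_prompt` are hypothetical, and the final LLM call is omitted because it depends on your provider.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; a real system would store embeddings in a vector database.
DOCS = {
    "travel-policy": "Employees must book flights through the approved travel portal.",
    "expense-policy": "Expense reports are due within 30 days of the purchase date.",
    "security-policy": "Laptops must use full disk encryption and a screen lock.",
}

def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question: str, k: int = 2) -> list[str]:
    """Step 1: rank documents by similarity to the question and keep the top k ids."""
    q = tokenize(question)
    ranked = sorted(DOCS, key=lambda d: cosine(q, tokenize(DOCS[d])), reverse=True)
    return ranked[:k]

def build_prompt(question: str, doc_ids: list[str]) -> str:
    """Step 2: pass the retrieved text to the model alongside the question."""
    context = "\n".join(f"[{d}] {DOCS[d]}" for d in doc_ids)
    return (
        "Answer using only the sources below and cite the source id.\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

question = "When are expense reports due?"
top_docs = retrieve(question)
prompt = build_prompt(question, top_docs)  # this string would be sent to the LLM
```

Swapping `tokenize` and `cosine` for an embedding model and a vector store changes the retrieval quality, not the shape of the flow.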

When to use different approaches:

- RAG: Best for most enterprise use cases; keeps foundation models current with your data without retraining

- Fine-tuning: Useful when you need the model to adopt specific writing styles, domain terminology, or output formats

- Domain-specific models: Required for specialized industries (healthcare, legal) where vertical large language models incorporate clinical guides, regulatory frameworks, or technical standards that general models lack

Industry examples demonstrate the pattern:

- A regional bank uses RAG over lending policies and Basel III documents, enabling loan officers to get instant answers about compliance requirements

- A pharma company connects RAG to clinical trial reports, allowing researchers to synthesize findings across studies

- A manufacturer builds RAG over maintenance manuals and sensor logs, giving technicians AI-generated troubleshooting guidance based on actual equipment history

For CIO/CTO readers: the technical stack typically includes a vector database (Pinecone, Weaviate, or similar) that stores document embeddings, an orchestration layer that manages retrieval and generation, and observability tools that track query performance and answer quality. Most enterprises can achieve significant value with RAG before considering more complex approaches like fine-tuning neural networks.

Defining and Curating High-Value Enterprise Data

The quality of AI answers is bounded by the quality and organization of your internal data. Implementing generative AI without addressing data foundations leads to disappointing results: the AI confidently generates answers based on outdated, inconsistent, or incomplete information.

Key data domains most enterprises can start with:

- Knowledge bases: Internal wikis, documentation, and how-to guides

- Past cases or tickets: Support history, incident reports, and resolution patterns

- Policies and SOPs: Compliance requirements, approval workflows, and operational procedures

- Product documentation: Specifications, user guides, and technical references

- Financial and operational reports: Historical analyses, board materials, and planning documents

Data governance requirements for AI readiness:

- Access controls: AI should only retrieve documents users are authorized to see

- PII redaction: Personal information must be masked or excluded from indexed and training data

- Versioning: AI needs access to current documents, not outdated versions

- Metadata tagging: Rich metadata improves retrieval accuracy and enables filtering
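
One way to picture the access-control and versioning requirements is a filter applied before any document reaches the model. The sketch below assumes each indexed document carries metadata for allowed groups and currency; the `Document` fields, group names, and index entries are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Document:
    doc_id: str
    version: int
    allowed_groups: frozenset  # groups permitted to read this document
    is_current: bool           # False for superseded versions

# Hypothetical index entries; real metadata would come from your document store.
INDEX = [
    Document("hr-comp-bands", 3, frozenset({"hr"}), True),
    Document("it-vpn-guide", 1, frozenset({"all"}), False),
    Document("it-vpn-guide", 2, frozenset({"all"}), True),
    Document("finance-close-sop", 5, frozenset({"finance", "audit"}), True),
]

def visible_documents(user_groups: set) -> list:
    """Return only current documents the user is authorized to see."""
    groups = set(user_groups) | {"all"}  # every user belongs to the "all" group
    return [
        doc for doc in INDEX
        if doc.is_current and doc.allowed_groups & groups
    ]

finance_view = [(d.doc_id, d.version) for d in visible_documents({"finance"})]
```

Here a finance user sees the current VPN guide and the close SOP, but neither the HR compensation bands nor the superseded guide. Filtering at retrieval time means the model never receives text the user could not open directly.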

Practical advice: Plan a 90-day data cleanup effort before broad AI rollout. Identify your highest-value knowledge sources, audit them for quality and currency, establish ownership and update processes, and implement the right data governance controls. Data scientists and engineers should partner with business teams who understand which documents matter most.

Tools that support this work include data catalogs for discovery, vector databases for semantic search, and DLP solutions for privacy protection. Stay tool-agnostic during planning—focus on requirements first, then select technology.

Guardrails, Policies, and Human-in-the-Loop

Enterprises must implement guardrails that prevent AI from generating harmful, inaccurate, or non-compliant outputs. This isn’t about limiting AI capabilities—it’s about enabling confident deployment across more sensitive use cases.

Essential guardrail categories:

- Content filters: Block generation of inappropriate, offensive, or dangerous content

- Policy-based access: Different users get different AI capabilities based on role and authorization

- Prompt injection protection: Prevent users from manipulating AI to bypass controls

- Output validation: Automated checks that flag potential hallucinations or policy violations

- Red-teaming exercises: Regular testing to identify vulnerabilities before bad actors do
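
As an illustration of output validation, the sketch below redacts two PII patterns and flags answers that cite no retrieved source or cite one that was never retrieved. The regular expressions are deliberately simple and the bracketed citation format is an assumption; production guardrails usually combine several such checks with dedicated tooling.

```python
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")   # US-style pattern, for illustration only
CITATION = re.compile(r"\[([\w-]+)\]")       # assumed citation format: [source-id]

def redact(text: str) -> str:
    """Mask email addresses and SSN-like numbers before the text leaves the system."""
    text = EMAIL.sub("[REDACTED_EMAIL]", text)
    return SSN.sub("[REDACTED_SSN]", text)

def validate_output(answer: str, retrieved_ids: set) -> dict:
    """Flag answers that are not grounded in the documents actually retrieved."""
    cited = set(CITATION.findall(answer))  # checked on the raw answer, before redaction
    issues = []
    if not cited:
        issues.append("no_citation")
    if cited - retrieved_ids:
        issues.append("cites_unretrieved_source")
    return {"text": redact(answer), "issues": issues, "needs_review": bool(issues)}

checked = validate_output(
    "Refunds are allowed within 30 days [refund-policy]. Contact jane.doe@example.com.",
    retrieved_ids={"refund-policy"},
)
suspect = validate_output("Refunds are always allowed [pricing-memo].", {"refund-policy"})
```

An answer with `needs_review` set would be routed to a human instead of being shown directly to the user.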

High-risk areas requiring mandatory human oversight:

- Medical advice and clinical recommendations

- Financial recommendations affecting customer accounts

- HR decisions involving hiring, compensation, or termination

- Legal guidance with contractual or liability implications

- Any decision with significant ethical considerations

Create an internal “AI usage policy” covering:

- What data can and cannot be used with AI tools

- Acceptable and prohibited use cases by department

- Escalation paths when AI outputs seem wrong or concerning

- Retention and audit requirements for AI-generated content

- Training requirements before employees gain AI access

Consider forming an AI safety review board or steering committee that meets quarterly to review incidents, update policies, and approve expansion into new use cases. This governance structure enables faster scaling because decisions don’t bottleneck on individual executives.

High-Impact Enterprise Use Cases Beyond ChatGPT

Moving beyond ChatGPT means shifting from generic chat use cases to end-to-end, cross-functional transformations. The use cases that deliver measurable business value share common characteristics: they automate actions (not just generate text), they integrate with systems of record, and they use AI systems to make predictions or recommendations that humans act on.

Research indicates quantifiable impacts across use case categories: 10-15% R&D expense savings, 20-40% productivity improvements in operations, 30-40% faster AI performance with proper data foundations. These metrics come from enterprises that moved beyond experimentation into production deployments integrated with core business operations.

The following use case clusters demonstrate what’s possible for mid-size enterprises—regional banks, manufacturing companies, insurers, and technology providers—not just Big Tech.

Product, Engineering, and R&D

AI-assisted software development has matured rapidly. Today’s generative AI solutions go far beyond autocomplete:

- Requirements to code: Natural language descriptions transform into code suggestions with appropriate error handling and documentation

- Automated test generation: AI creates test cases based on code changes, improving coverage without manual test writing

- CI/CD integration: AI code reviewers flag security vulnerabilities, performance issues, and style violations before human review

- Drug discovery acceleration: Pharma companies use generative AI to identify molecular candidates, reducing early-stage R&D timelines

Generative design in hardware and manufacturing includes auto-generating CAD variations optimized for weight, cost, and manufacturability. Engineers review AI-generated options rather than creating each variation manually.

Measurable KPIs from production deployments:

- 30-50% reduction in bug-fix cycle time

- 2x improvement in deployment frequency

- 40% reduction in time spent on code review

- 25% faster time-to-market for new features

Operations, Supply Chain, and Manufacturing

Generative AI transforms operations through scenario planning, automated playbook generation, and predictive maintenance integration. Supply chains represent a particularly high-impact domain.

A global manufacturer implemented AI-generated maintenance instructions aligned with IoT sensor alerts. When sensors detect anomalies, AI generates troubleshooting steps referencing the specific equipment model, maintenance history, and parts availability. Results: 35% reduction in unplanned downtime, 20% improvement in first-time fix rates.

Operational use cases with proven impact:

- Demand forecasting narratives: AI explains forecast changes, identifies drivers, and recommends inventory adjustments. Companies report 10-15% better forecast accuracy leading to lower inventory days.

- Disruption playbooks: When supply chains face port closures, supplier failures, or logistics delays, AI auto-generates response playbooks based on past incidents and current constraints.

- Quality control augmentation: AI analyzes inspection images, flags defects, and generates quality reports, reducing inspection time while improving detection rates.

The value lever in operations is clear: faster decisions, fewer disruptions, and efficiency improvements that compound across plants, warehouses, and suppliers.

Finance, Risk, and Compliance

FP&A teams traditionally spend the first two weeks of each month on manual Excel and PowerPoint work. Generative AI changes this equation dramatically.

AI-generated outputs for finance teams:

- Management reports: Variance analyses with narrative explanations, trend identification, and recommended actions

- Board packs: Executive summaries synthesized from operational data, market trends, and financial results

- Audit support: Documentation compiled and organized for auditor requests, with explanations of key transactions

Compliance automation addresses growing regulatory burden. The EU AI Act, revised GDPR guidance, and sector-specific requirements create vast amounts of new compliance work. AI summarizes new regulations, maps requirements to existing policies, and identifies gaps requiring action.

A regional bank implemented AI-generated risk summaries for large corporate exposures. AI drafts the initial assessment referencing credit data, news, and industry trends. Risk officers review and approve, focusing their expertise on judgment calls rather than data gathering. Cycle time dropped 50% while coverage improved.

Customer Experience and Revenue Teams

Capabilities driving customer experience transformation:

- Personalized responses: AI generates replies referencing purchase history, support tickets, and preference data from CRM

- Intelligent routing: Calls and messages route to the right agent with AI-generated context summaries and suggested responses

- Next-best-actions: Sales teams receive AI recommendations for upsells, follow-ups, and retention interventions based on usage patterns

A B2B SaaS company implemented AI-drafted renewal offers and QBR decks. The system analyzes product usage, support history, and customer goals to generate personalized renewal proposals. Results: 15% improvement in renewal rates, 25% reduction in time spent preparing customer materials.

Average handle time reductions of 20-30% are common when agents receive AI-generated summaries and suggested responses. NPS improvements follow as customers experience faster, more personalized service.

A Practical Roadmap: From Pilot to Platform

The path from experimentation to production follows a predictable pattern. Companies that succeed move through distinct phases with clear milestones and accountability.

Phase 1: Discovery (Months 1-3)

- Inventory current AI experiments and assess results

- Identify 3-5 high-potential use cases using a scoring framework

- Establish governance policies and data privacy requirements

- Select initial technology partners and platforms

Phase 2: Pilot (Months 4-9)

- Launch 1-3 pilots with dedicated owners and executive sponsors

- First RAG use case live within 90 days of project start

- Define success metrics before launch, measure continuously

- Document learnings, build repeatable patterns

Phase 3: Expansion (Months 10-18)

- Scale successful pilots to additional teams and regions

- Build shared components (embedding pipelines, guardrails, monitoring)

- Train business champions in each function

- Enterprise AI policy approved and communicated

Phase 4: Platformization (Months 19-24)

- Establish AI platform with self-service capabilities

- Integrate AI into core enterprise systems (ERP, CRM, HR)

- Mature observability, cost management, and governance

- AI becomes a standard feature of workflow design

Avoiding “pilot purgatory” requires tying each project to a business owner, defined KPIs, and an executive sponsor. Pilots without clear success criteria and accountable owners tend to stall.

A mid-size insurer followed this roadmap. They started with claims document processing (Phase 1-2), expanded to underwriting assistance (Phase 3), and now have an AI platform supporting customer service, compliance, and operations (Phase 4). Total investment: $3M over 24 months. Measured savings: $12M annually in operational efficiency.

Prioritizing Use Cases and Measuring ROI

Not all use cases deserve investment. A scoring framework helps focus resources on opportunities with the best return profile.

Score each potential use case across three dimensions:

| Dimension | Factors to Consider |
|---|---|
| Impact | Revenue potential, cost savings, risk reduction, strategic importance |
| Feasibility | Data readiness, integration effort, technology maturity, talent availability |
| Risk | Regulatory requirements, reputational exposure, ethical considerations, compliance risks |
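
The three dimensions can be combined into a single ranking. The sketch below is one possible scheme, not a prescribed method: the 1-5 scales, the weights, and the example use cases and their ratings are all assumptions to adjust for your own priorities.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    name: str
    impact: int       # 1 (low) to 5 (high)
    feasibility: int  # 1 (hard) to 5 (easy)
    risk: int         # 1 (low) to 5 (high)

# Assumed weights; tune them to your own priorities.
WEIGHTS = {"impact": 0.5, "feasibility": 0.3, "risk": 0.2}

def score(uc: UseCase) -> float:
    """Weighted score on a 1-5 scale; risk is inverted so lower risk scores higher."""
    return round(
        WEIGHTS["impact"] * uc.impact
        + WEIGHTS["feasibility"] * uc.feasibility
        + WEIGHTS["risk"] * (6 - uc.risk),
        2,
    )

# Illustrative candidates with made-up ratings.
candidates = [
    UseCase("Claims document processing", impact=4, feasibility=4, risk=2),
    UseCase("Automated credit decisions", impact=5, feasibility=2, risk=5),
    UseCase("Internal knowledge assistant", impact=3, feasibility=5, risk=2),
]
ranked = sorted(candidates, key=score, reverse=True)
```

With these ratings, the high-impact but high-risk credit use case ranks last, which reflects the idea that impact alone should not decide where to invest first.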

Typical ROI metrics by function:

- Support: Cost per ticket, first-contact resolution, handle time

- Sales: Time to quote, win rate, days sales outstanding

- HR: Time-to-hire, cost per hire, employee engagement

- Compliance: Review time, audit findings, regulatory penalty risk

- Operations: Error rates, cycle time, unplanned downtime

Set ambitious but achievable targets. A reasonable benchmark: prove >5x return on initial AI investment within 12-18 months, combining hard savings (headcount, vendor costs) with soft benefits (cycle time, quality improvements).
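
To make the benchmark concrete, here is the arithmetic applied to the insurer figures quoted in the roadmap section ($3M invested, $12M in annual savings). It assumes savings accrue at a steady monthly rate once the platform is live, which the example does not state, so treat the outputs as illustrative. The function names are hypothetical.

```python
def return_multiple(total_investment: float, annual_savings: float, months: float) -> float:
    """Cumulative savings over `months`, divided by total investment."""
    return (annual_savings / 12) * months / total_investment

def months_to_multiple(total_investment: float, annual_savings: float, target: float) -> float:
    """Months of steady-state savings needed to reach `target` times the investment."""
    return target * total_investment / (annual_savings / 12)

investment = 3_000_000       # from the insurer example
annual_savings = 12_000_000  # from the insurer example

one_year = return_multiple(investment, annual_savings, months=12)             # 4.0
to_five_x = months_to_multiple(investment, annual_savings, target=5)          # 15.0
```

Under that steady-rate assumption, one year of savings returns 4x the investment, and the 5x mark is reached after 15 months of savings, inside the 12-18 month window.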

Technology, Talent, and Operating Model

The enterprise AI stack includes core components that must work together:

- LLM provider(s): Foundation models accessed via API (consider multi-model strategies for resilience)

- Orchestration layer: Manages prompts, retrieval, and model interactions

- Vector store: Enables semantic search over enterprise documents

- Observability: Tracks usage, latency, costs, and quality metrics

- Security and governance: Access controls, audit logging, content filtering
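
A multi-model strategy usually lives in the orchestration layer as a fallback chain. The sketch below assumes two hypothetical provider functions, one of which simulates an outage; real provider SDK calls would replace them.

```python
class ProviderError(Exception):
    """Raised when a model provider fails to return a completion."""

def primary_model(prompt: str) -> str:
    raise ProviderError("primary provider timed out")  # simulated outage

def secondary_model(prompt: str) -> str:
    return f"[secondary] summary of: {prompt}"

def complete_with_fallback(prompt: str, providers: list) -> dict:
    """Try each provider in order and record failures for observability."""
    errors = []
    for name, call in providers:
        try:
            return {"provider": name, "text": call(prompt), "errors": errors}
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("; ".join(errors))

response = complete_with_fallback(
    "Q3 variance report",
    [("primary", primary_model), ("secondary", secondary_model)],
)
```

Returning the error list with the result lets the observability layer track how often the fallback path is used.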

Key roles for AI success:

- Product owners: Define use cases, success metrics, and user requirements

- ML/AI engineers: Build and maintain AI systems and integrations

- Data engineers: Prepare data pipelines and maintain quality

- Prompt engineers: Optimize prompts for accuracy and efficiency

- Business champions: Drive adoption within functions, gather feedback

Form an AI center of excellence early in the program. This team centralizes best practices, maintains shared components, and prevents duplication of effort across business units. It doesn’t own every AI project; it enables projects across the enterprise.

Risk, Compliance, and Responsible Adoption

The regulatory landscape for artificial intelligence has matured significantly. As of 2024-2025, enterprises must navigate GDPR, CCPA/CPRA, HIPAA (for healthcare), the EU AI Act, and sector-specific guidance from banking regulators and industry bodies.

Main risk categories requiring active management:

| Risk | Example | Mitigation |
|---|---|---|
| Data leakage | Sensitive information flows to external AI providers | Private deployments, data classification, access controls |
| Hallucinations | AI generates plausible but false information | RAG with verified sources, human review for high-stakes outputs |
| Bias/discrimination | AI recommendations disadvantage protected groups | Testing across demographic groups, regular audits, ethical considerations in design |
| IP/copyright issues | AI generates content infringing third-party rights | Training data provenance, output filtering, legal review processes |
| Lack of auditability | Can’t explain why AI made a recommendation | Logging, explainability tools, documented decision criteria |

Design “compliance-by-default” AI workflows with:

- Automatic logging of all AI interactions and outputs

- Required approvals for outputs in regulated domains

- Retention rules aligned with industry requirements

- Clear escalation paths when AI outputs require review
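
A compliance-by-default workflow can start with an audit record written for every interaction. In the sketch below, the list of regulated domains and the retention periods are placeholder assumptions, not legal guidance, and content hashes are stored instead of raw text to avoid copying sensitive data into logs. Your own requirements may call for retaining full content.

```python
import hashlib
from datetime import datetime, timedelta, timezone

# Placeholder values; set these with your legal and compliance teams.
REGULATED_DOMAINS = {"finance", "healthcare", "hr", "legal"}
RETENTION_DAYS = {"finance": 7 * 365, "default": 365}

def sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def audit_record(user: str, domain: str, prompt: str, output: str, now=None) -> dict:
    """Build one log entry per AI interaction, with approval and retention flags."""
    now = now or datetime.now(timezone.utc)
    days = RETENTION_DAYS.get(domain, RETENTION_DAYS["default"])
    return {
        "timestamp": now.isoformat(),
        "user": user,
        "domain": domain,
        "prompt_sha256": sha256(prompt),
        "output_sha256": sha256(output),
        "requires_approval": domain in REGULATED_DOMAINS,
        "retain_until": (now + timedelta(days=days)).date().isoformat(),
    }

fixed_time = datetime(2026, 1, 1, tzinfo=timezone.utc)
record = audit_record("a.user", "marketing", "Draft a tagline", "Built-in, not bolted on", fixed_time)
```

Because `requires_approval` is derived from the domain rather than chosen by the user, regulated outputs cannot skip the approval step by omission.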

Work with legal, security, and compliance teams from day one. Early adopters who treated compliance as an afterthought faced costly remediation. Those who embedded compliance from the start scaled faster and with more confidence.

Human Oversight and Change Management

Generative AI augments expert judgment—it doesn’t replace it. This is especially critical in finance, healthcare, legal, and HR where wrong answers carry significant consequences.

Design human-in-the-loop patterns with clear thresholds:

- Full automation: Low-risk, high-volume tasks where AI accuracy is proven

- AI draft, human review: Medium-risk tasks where humans validate before action

- Human decision, AI assist: High-risk decisions where AI provides information but humans decide

- Human only: Sensitive decisions where AI involvement is inappropriate
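
These four patterns can be encoded as an explicit routing rule so that the threshold decisions are reviewable. The cutoffs, the list of sensitive domains, and the `risk_score` and `proven_accuracy` inputs below are assumptions for illustration; each organization would set its own.

```python
from enum import Enum

class Mode(Enum):
    FULL_AUTOMATION = "full_automation"
    AI_DRAFT_HUMAN_REVIEW = "ai_draft_human_review"
    HUMAN_DECISION_AI_ASSIST = "human_decision_ai_assist"
    HUMAN_ONLY = "human_only"

# Assumed examples of domains where AI involvement is ruled out entirely.
SENSITIVE_DOMAINS = {"termination", "clinical_diagnosis"}

def route(domain: str, risk_score: float, proven_accuracy: float) -> Mode:
    """Pick an oversight pattern from domain, risk (0-1), and measured accuracy (0-1)."""
    if domain in SENSITIVE_DOMAINS:
        return Mode.HUMAN_ONLY
    if risk_score >= 0.7:
        return Mode.HUMAN_DECISION_AI_ASSIST
    if risk_score >= 0.3 or proven_accuracy < 0.98:
        return Mode.AI_DRAFT_HUMAN_REVIEW
    return Mode.FULL_AUTOMATION

invoice_mode = route("invoice_matching", risk_score=0.1, proven_accuracy=0.995)
credit_mode = route("credit_limit_change", risk_score=0.8, proven_accuracy=0.99)
```

Note that full automation requires both low risk and proven accuracy: a low-risk task with unproven accuracy still gets human review.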

Training programs for AI adoption should include:

- Mandatory “AI literacy” sessions for all employees as the first step

- Role-specific training on AI tools and workflows as the next step

- Regular updates as capabilities and policies evolve

- Clear guidance on when AI outputs require verification

Cultural aspects matter as much as training:

- Address fear of job loss directly—emphasize augmentation, not replacement

- Celebrate early success stories and growth opportunities they enabled

- Create safe channels for reporting AI errors without blame

- Recognize employees who identify issues that improve AI systems

Conclusion: Making Generative AI a Built-In Advantage

Moving beyond ChatGPT means embedding generative AI across systems, data, and decisions—not just offering a chat tool on the side. The companies extracting real business value have integrated AI into workflows where work already happens, built domain-specific intelligence using their own data, and established governance that enables confident scaling.

The path forward requires action on multiple fronts: define your AI strategy aligned with business goals, clean and govern your data foundations, build domain-specific intelligence through RAG and selective fine-tuning, integrate AI into core workflows where it can take actions and drive decisions, and establish responsible governance that balances innovation with oversight.

Enterprises that act now to build AI into their operating model will set the competitive baseline for the rest of the decade. Generative AI isn’t optional. The question is whether you’ll lead digital transformation in your industry or follow competitors who moved faster.

Start your first 90-day plan today:

- Pick one high-impact use case where data is ready and value is clear

- Assemble a cross-functional team with business, technology, and governance representation

- Define success metrics before you build

- Build, measure, learn, and scale

By the late 2020s, AI will be a default feature of every enterprise workflow, invisible and essential. The work you do now determines whether your organization leads that future or struggles to catch up.
